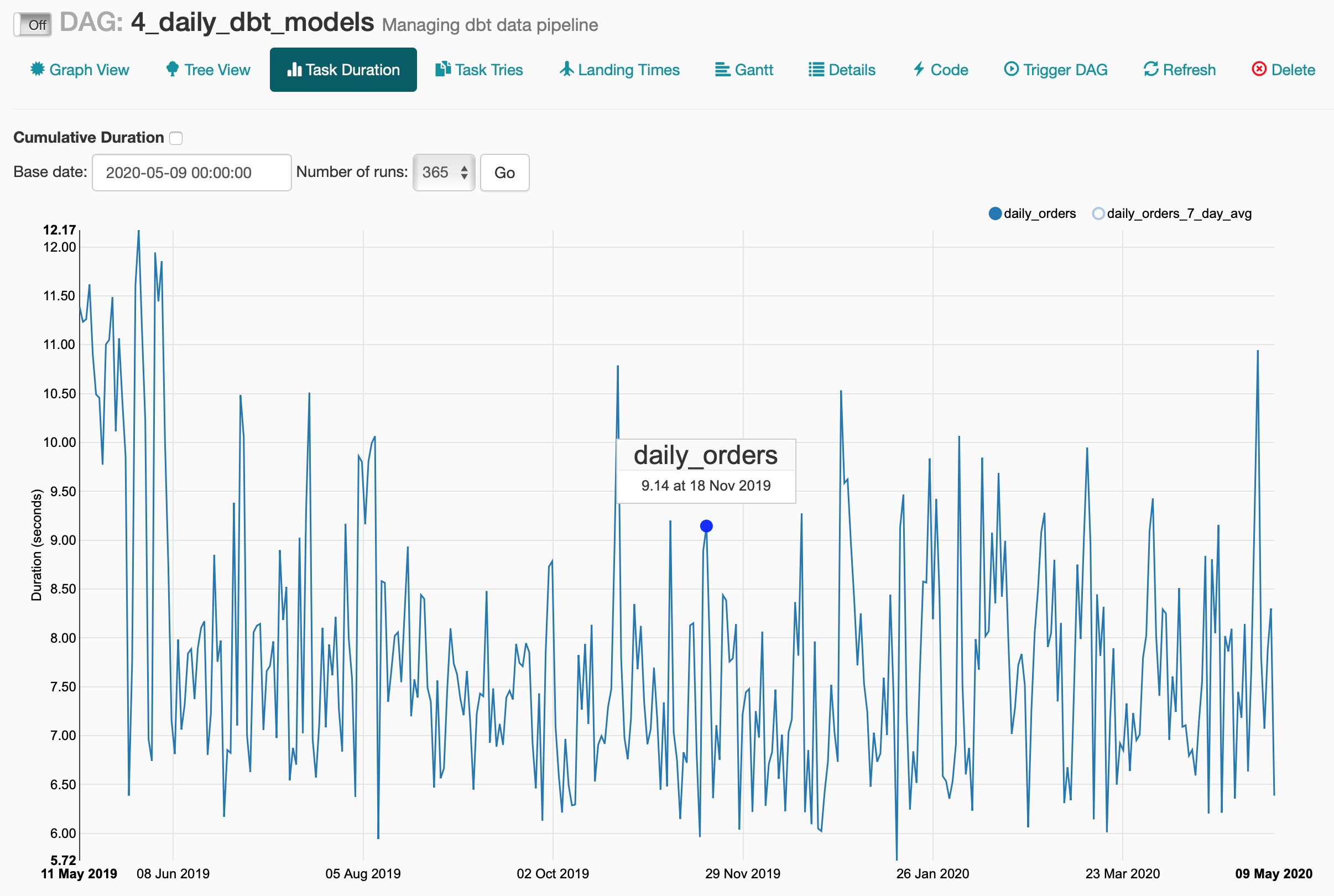

Over the past few years, trends have been shifting towards more specialized services such as transformation, orchestration, testing and UI. Traditional data transformation tools are usually all-in-one monolithic platforms that lack customization and flexibility.

If you need to connect to the running containers, use `docker-compose ps` to view the running services. For example, to connect to the Airflow service, you can execute `docker exec -it dbt-airflow-docker_airflow_1 /bin/bash`. This will attach your terminal to the selected container and activate a bash terminal. Since only the Airflow service is based on local files, it is the only image that is re-built by `docker-compose build` (useful if you apply changes on the ...).

Project Notes and Docker Volumes

Because the project directories (... airflow) are defined as volumes in docker-compose.yml, they are directly accessible from within the containers.

On Airflow startup the existing models are compiled as part of the initialisation script. If you make changes to the models, you need to re-compile them. Attach to the container with `docker exec -it dbt-airflow-docker_airflow_1 /bin/bash`. This will open a session directly in the container running Airflow. In general, attaching to the container helps a lot in debugging.

You can make changes to the dbt models from the host machine, run `dbt compile` on them, and on the next DAG update they will be available (beware of major changes that require `--full-refresh`). For such changes it is suggested to connect to the container (`docker exec ...`). Alternatively you can run `docker-compose down && docker-compose rm && docker-compose up`.

The initialise_data.py file contains the upfront data loading operation of the seed data. The dag.py file contains all the handling of the DBT models. Changes to these files appear after a few seconds in the Airflow admin. A key aspect is the parsing of the manifest.
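To make the manifest parsing more concrete, here is a minimal sketch of how model dependencies could be read from a dbt manifest. It is not the project's actual dag.py. It assumes the manifest is the JSON file that dbt writes to target/manifest.json, with a top-level `nodes` mapping whose entries carry `resource_type` and `depends_on.nodes`. The function names and the sample model names (`stg_orders`, `orders_daily`) are hypothetical and used only for illustration.

```python
import json
from graphlib import TopologicalSorter  # Python 3.9+
from pathlib import Path


def load_manifest(path):
    """Read a dbt manifest file (assumed location: target/manifest.json)."""
    return json.loads(Path(path).read_text())


def model_dependencies(manifest):
    """Map each model's unique id to the other models it depends on."""
    nodes = manifest.get("nodes", {})
    models = {
        uid for uid, node in nodes.items()
        if node.get("resource_type") == "model"
    }
    deps = {}
    for uid in sorted(models):
        parents = nodes[uid].get("depends_on", {}).get("nodes", [])
        # Keep only model-to-model edges; seeds and sources are skipped here.
        deps[uid] = [parent for parent in parents if parent in models]
    return deps


def execution_order(deps):
    """Return the models ordered so that parents come before children."""
    return list(TopologicalSorter(deps).static_order())


# Hypothetical in-memory manifest, shaped like the relevant part of manifest.json.
SAMPLE_MANIFEST = {
    "nodes": {
        "seed.demo.raw_orders": {
            "resource_type": "seed",
            "depends_on": {"nodes": []},
        },
        "model.demo.stg_orders": {
            "resource_type": "model",
            "depends_on": {"nodes": ["seed.demo.raw_orders"]},
        },
        "model.demo.orders_daily": {
            "resource_type": "model",
            "depends_on": {"nodes": ["model.demo.stg_orders"]},
        },
    }
}

sample_deps = model_dependencies(SAMPLE_MANIFEST)
sample_order = execution_order(sample_deps)
```

In a DAG file, each entry of such an ordering could become one task that runs the corresponding model, with the dependency map used to wire tasks together. How the project actually does this is defined in dag.py.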
- Enable the services: `docker-compose up` or `docker-compose up -d` (detaches the terminal from the services' log).
- Disable the services: `docker-compose down`. Non-destructive operation.
- Delete the services: `docker-compose rm`. Deletes all associated data.
- Re-build the services: `docker-compose build`. Re-builds the containers based on the docker-compose.yml definition.

Adminer UI: Credentials as defined at docker-compose.yml.

Once everything is up and running, navigate to the Airflow UI (see connections above). You will be presented with the list of DAGs, all Off by default. You will need to run them in the correct order:

- 1_load_initial_data: Load the raw Kaggle dataset.
- 2_init_once_dbt_models: Perform some basic transformations (i.e. ...).
- 3_snapshot_dbt_models: Build the snapshot tables.
- 4_daily_dbt_models: Schedule the daily models. The starting date is set to Jan 6th, 2019. This will force Airflow to backfill all data for those dates.

If everything goes well, you should have the daily model execute successfully and see similar task durations. Finally, within Adminer you can view the final models.
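The backfill behaviour of the daily DAG can be illustrated with a small sketch in plain Python. It assumes a daily schedule with catch-up enabled, and relies on Airflow triggering the run for a given date only once that day has fully elapsed. Only the Jan 6th, 2019 start date comes from the text above; the function name and the `now` value are hypothetical.

```python
from datetime import datetime, timedelta

# Start date of the daily DAG, as stated in the text.
START_DATE = datetime(2019, 1, 6)


def daily_backfill_dates(start, now):
    """List the logical dates of the daily runs that are due by `now`.

    Assumption: the run for date D is triggered at D + 1 day,
    i.e. after the interval it covers has ended.
    """
    dates = []
    current = start
    while current + timedelta(days=1) <= now:
        dates.append(current)
        current += timedelta(days=1)
    return dates


# Hypothetical example: the DAG is switched on at midnight on Jan 10th, 2019.
due_runs = daily_backfill_dates(START_DATE, datetime(2019, 1, 10))
for run_date in due_runs:
    print(run_date.date())
```

The later the DAG is switched on relative to the start date, the more runs Airflow has to catch up on, which is why the first activation of 4_daily_dbt_models can take a while.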

- airflow: Python-based image to execute Airflow scheduler and webserver.
- postgres-dbt: DB for the DBT seed data and SQL models to be stored.
- postgres-airflow: DB for Airflow to connect and store task execution information.
